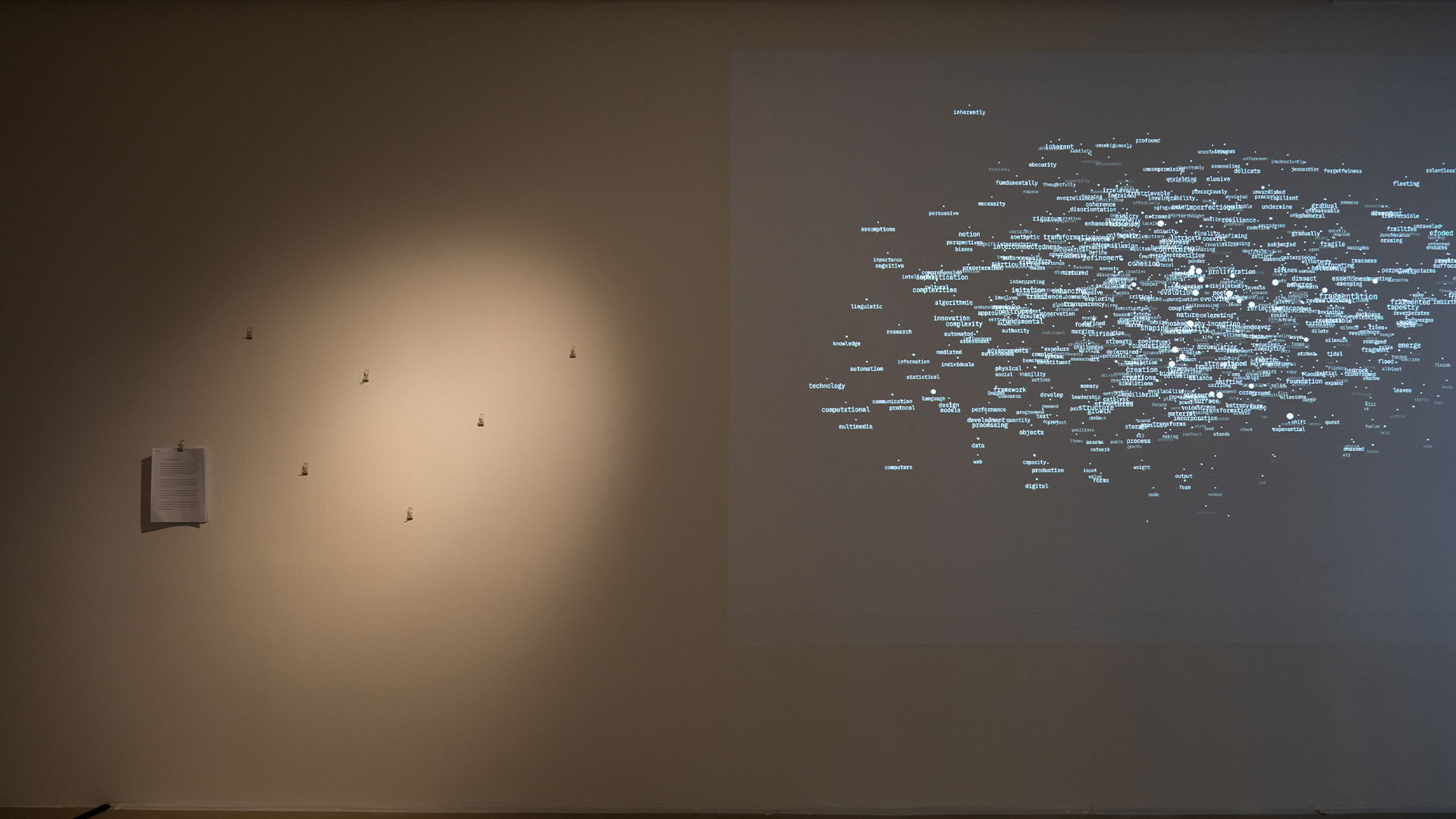

jo+kapi concluded their artist residency with the exhibition 𝚊𝚗 𝚊𝚛𝚛𝚊𝚗𝚐𝚎𝚖𝚎𝚗𝚝 𝚘𝚏/𝚏𝚘𝚛 𝚛𝚊𝚗𝚍𝚘𝚖 𝚠𝚘𝚛𝚍𝚜, which was on view from 4 to 13 April at SMU de Suantio Gallery. During the residency, they further developed their ongoing project Enzyme, an evolving inquiry into creative labour, authorship, and value in algorithmically generated art. Specifically, they began to inquire into how proximity, structure, and interpretation shape meaning, and how we construct and navigate it in the age of generative systems. As part of their exploration, they facilitated a closed-door symposium with invited interlocutors. The conversations, insights, and provocations will shape the direction of future iterations and open new pathways for the project to evolve. SMU Libraries invited writer Ian Tee to document this symposium.

Digestive Aids for Enzyme 2.0

By Ian Tee

On 5 and 6 April 2025, a closed-door symposium was held in conjunction with jo+kapi’s exhibition 𝚊𝚗 𝚊𝚛𝚛𝚊𝚗𝚐𝚎𝚖𝚎𝚗𝚝 𝚘𝚏/𝚏𝚘𝚛 𝚛𝚊𝚗𝚍𝚘𝚖 𝚠𝚘𝚛𝚍𝚜. The two-day programme centred on the role of language and writing in art-making, as well as collaborative brainstorming of ideas that could be presented in the next iteration of Enzyme. Jo and Kapi’s invited interlocutors were a group of artists and writers working with digital platforms: Hilary Yeo, Rafi Abdullah, Aditi Neti, Alysha Chandra, and Seet Yun Teng. I participated as a writer tasked with documenting the symposium, and through this essay, I hope to unpack some of the key questions raised and share my personal reflections. In the two months since, my mind has kept returning to the workshops as discussions about generative AI and its impact on the workplace and education have intensified.

Day 1: Unwriting the Manifesto

While the first iteration in the Enzyme series digested images, its successor “turned its attention to language — specifically to the spaces between words”. During the artist talk, Jo and Kapi shared how the series responded to social behaviours spurred by emerging technologies. Enzyme 1.0 questioned one’s relationship with images during the NFT boom in 2020-2022, while 𝐄𝐧𝐳𝐲𝐦𝐞 𝟐.𝟎 ruminates on how large language models (LLMs) impact writing and artmaking. It is a critique of the logic behind predictive text and of the notion of language collapse, which, in the context of LLMs, refers to the degeneration of models when they are trained on data generated by other AI instead of humans.

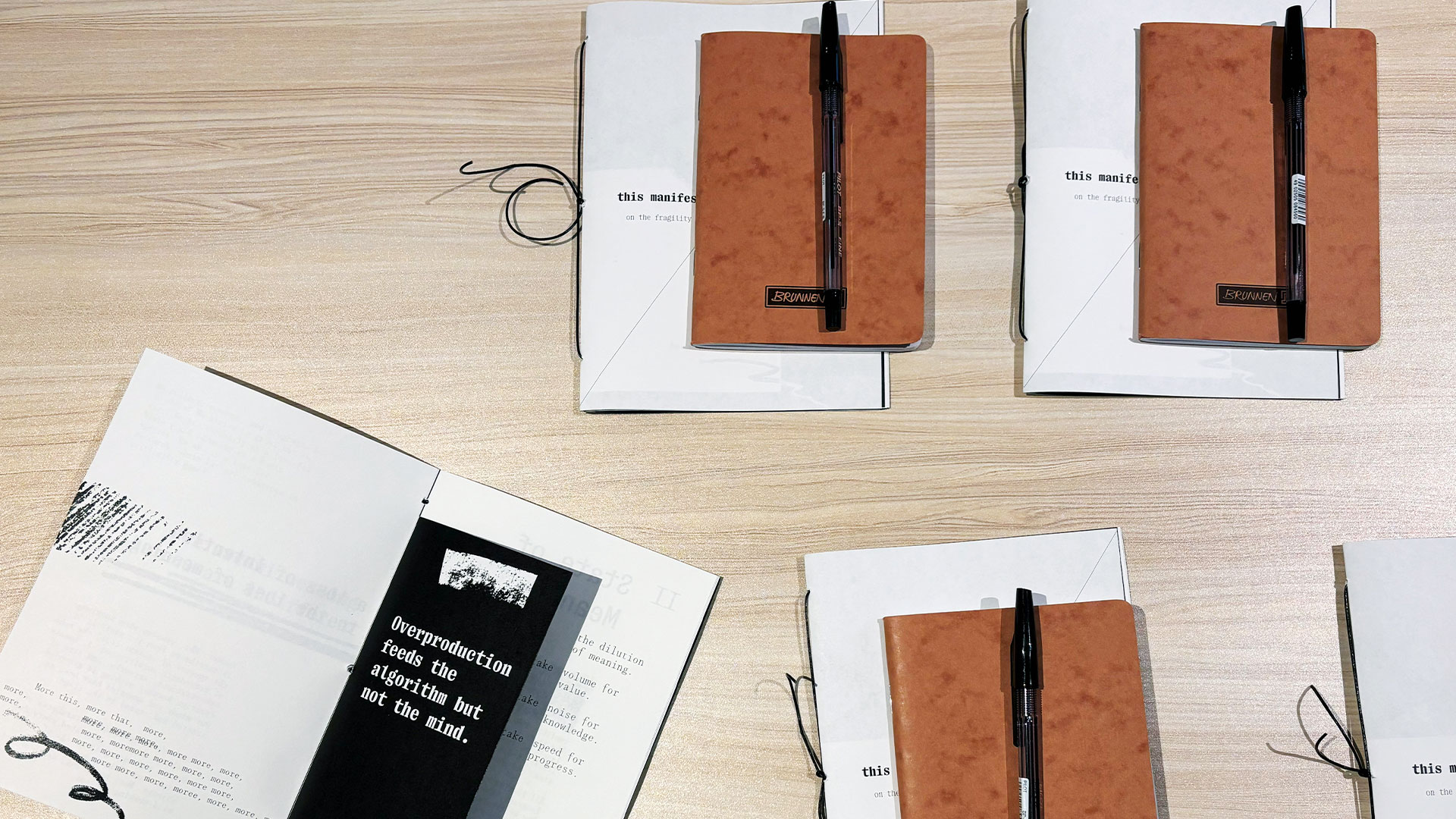

The main activity for Day 1 involved a physical engagement with the manifesto that was fed to 𝐄𝐧𝐳𝐲𝐦𝐞 𝟐.𝟎. The key difference between us and the machine was that taking apart the manifesto in its zine form added much more complexity to the process. The tactility of paper gave weight to the printed word, while typography and other graphic design features affected the reading experience. The exercise stimulated a discussion on how presentation formats change the way we make meaning from text, providing “data points” currently unavailable to LLMs.

Conversely, this question also applies to the format of screens or applications and how they influence writing preferences. Does typing in a Word document with page breaks signal an expectation of printing the text in standardised formats? What compels a writer to go page-less in a Notes app or, even more radically, to write without punctuation? What about old-school journaling in a book? Perhaps it is about structure and flow. My mind went to the analogy of swimming, where writing on pages is akin to swimming laps in a pool, while going page-less is like swimming in the sea. There is a connection between these writing habits and how the writer thinks and strings words together.

The discussion then shifted to the topic of writing with AI assistance, through predictive text functions or generative chatbots such as ChatGPT. An interesting point made was that LLMs, like humans, tend to display stylistic traits in their responses. The current “tell-tale signs” of AI-generated text include certain words, metaphors, and liberal use of syntax features like em dashes. This is accounted for by the LLM’s logic of pattern recognition, which is a framework based on probability rather than semantics. Thus, it is a false assumption, or misplaced expectation, that AI operates with communicative intent, which is why we feel lied to when AI hallucinates.

Day 2: Provocations for Enzyme 2.1

The main programme on Day 2 was a rule-making workshop where we collectively established the conceptual framework that would guide a potential Enzyme 2.1. These speculative rules would shape the model’s priorities, ethics, and constraints, in turn offering a different vision for the human-AI relationship. Here, I was reminded of Korean artist Lee Ufan’s comments about AI in an interview with Artforum, published on 22 November 2024:

It is important for artists to raise questions or experience various things. Since AI provides answers, it robs the user of the process, time, experience, everything. I find it problematic because it only pops out the answer and does not possess the various ambiguities, complexities, doubts, etc., that art does. And the source of all that does not lie in people, but in things that transcend humanity, like nature or the cosmos… that is a lot more primordial, profound, and immense.

Are we externalising our thinking or ability to create in the name of convenience when we rely on AI-generated outputs? A WIRED podcast episode, published on 23 May 2025, discussed the issue of ChatGPT and cheating in the classroom, and the hosts offered a helpful comparison for thinking about this problem. They compared the impact of generative chatbots in an educational setting to that of calculators and of the Internet, where information is readily available. They concluded that chatbots are closer to calculators in that they provide shortcuts to answers, which could result in the loss of skills over time. Would we lose the ability to conduct research, compare datasets, and summarise texts due to over-reliance on AI outputs?

A list of provocations was raised during the workshop. Here are a few to chew on:

- Could the design of AI interfaces go beyond being a productivity tool?

- What does uncertainty look like beyond the impression of answers?

- What if Enzyme 2.1 redacts fact but retains emotions and subjectivity?

- Can AI be an audience?

The last point gets at a core limitation of AI. Currently, it is still unable to generate intent and close the loop of meaning. The human reader remains responsible for interpretative labour, even as generated outputs seem to “create” new metaphors and ideas. Personally, the conversations arising out of this symposium helped me concretise the more abstract concerns around AI. They also laid bare how much of a black box LLMs are. It is fascinating to probe and learn how the model “reads” information differently from the human mind. Yet, even as we study how LLMs work, an even larger-scale experiment is happening with the proliferation of this technology. It has implications for the evolution of language, social relations, and creativity. Pandora’s box is open and we do not know its contents.

About the writer

Ian Tee is an artist and writer based in Singapore. His studio practice is concerned with the experience of seeing and how paintings are “read.” It is a method of thinking about formal qualities, abstraction, and their relationship with various cultural contexts. He has been commissioned by Singapore Art Museum’s public art programme and has presented works at MAIIAM Contemporary Art Museum, Institute of Contemporary Art Singapore, and Grey Projects, among others. Ian is also Editor of Art & Market (A&M), a multimedia platform that features artistic, business, and curatorial practices from Southeast Asia.